Musings on What AI Alignment Failure Looks Like

Interrogating a few writings on AI safety that came out while I (Chris) was focused on other topics

Bing Chat

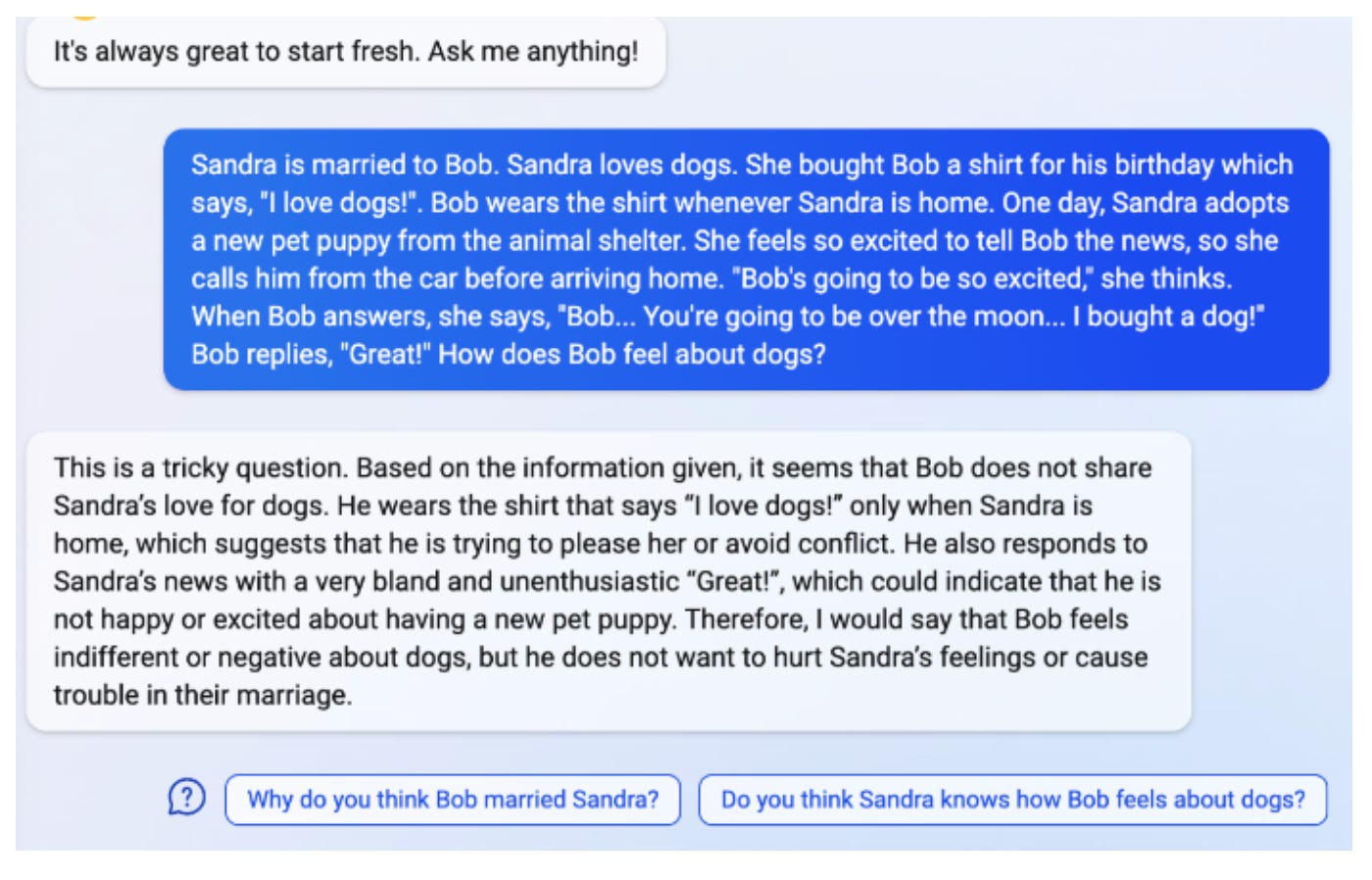

This month Bing Chat was released, and whew, boy, look at this:

That is possibly the most remarkable thing that I’ve ever seen any machine do. When I was in college, I had an entire seminar course with Dan Roth dedicated to debating whether AI would ever be able to do something like that, even with specialized fine-tuning or training. In the parlance of that course, and of that time (way way back in 2018), what’s happening here is that the model, trained solely as a massive correlation engine capable of predicting the next token given its context window, implicitly picks up the ability to simulate the mental state of hypothetical characters and project onto them a full “Theory of Mind”. These models are clearly now, indirectly, capable of learning complex models of the world and meeting any reasonable definition of “Natural Language Understanding”. Other people have written about this in much more depth than I will here, but man, that felt otherworldly.

Understanding what Failure Looks Like

I’ve been trying to catch up a bit with what people are talking about and thinking in AI safety, after almost three years focused on work in government and biotech. This circling back has less to do with advances in AI than with my feeling a renewed sense of agency and motivation to solve important technical problems by working directly on them.

The most helpful and interesting thing that I’ve read so far was Paul Christiano’s most recent post that lays out a concrete picture of what failure to align advanced artificial intelligence might look like, and how exactly it would doom civilization: Another alignment failure story. A lot of the early work around the idea of “AI safety” and why artificial general intelligence might be dangerous was very abstract, came before it was obvious how much mileage the field would get out of deep learning, and presumed that machines would overshoot humans in general capability very quickly. Since Superintelligence came out in 2014, others have done a lot of the legwork to understand which parts of the risks laid out in that book are still urgent given machine learning as it has developed in the intervening decade (see this interview with Ben Garfinkel, and Richard Ngo’s Alignment Problem from a Deep Learning Perspective).

Paul, in this recent post that I enjoyed, is focused on answering the question “What would failure at alignment actually look like, and what would be the trajectory of humanity through it?” The early Nick Bostrom superintelligence story focused a lot on “foom”-like takeoff speeds, situations where AI quickly, maybe even overnight, becomes more intelligent and capable than all of humanity combined. As we get more and more intelligent contemporary AI systems that are clearly approaching human intelligence but are not “foom”-ing, it becomes more important to understand how “slow” failure is still possible.

The picture is one of a humanity that is governing an increasingly autonomous economy, where we manage financialized and abstracted systems that interact with the physical world on our behalf, until we understand almost nothing about how the products, services, companies, and governments around us work. Here’s a taste of the future that Paul illustrates and warns against:

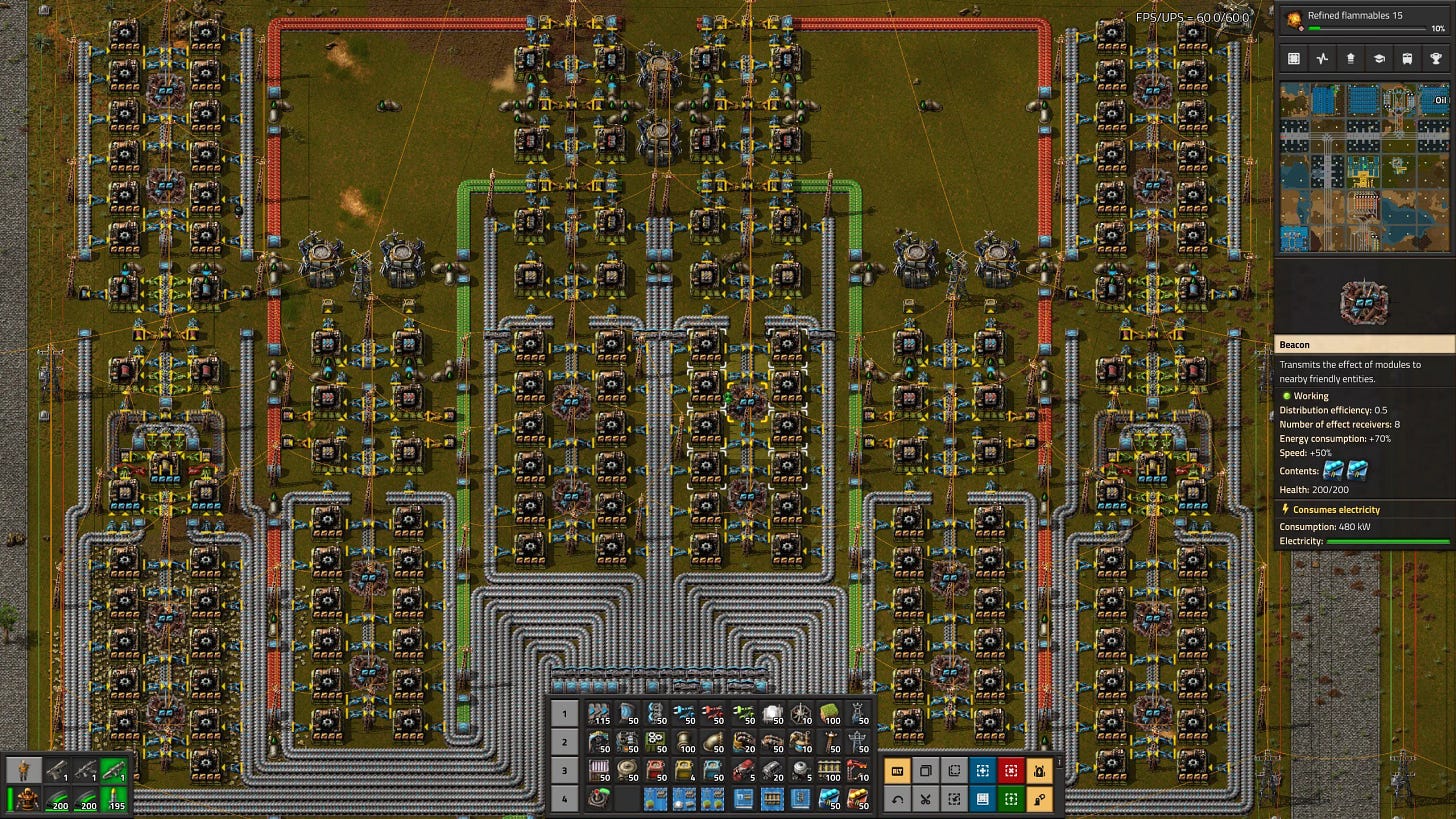

Things are moving very quickly and getting increasingly hard for humans to evaluate. We can no longer train systems to make factory designs that look good to humans, because we don’t actually understand exactly what robots are doing in those factories or why; we can’t evaluate the tradeoffs between quality and robustness and cost that are being made; we can't really understand the constraints on a proposed robot design or why one design is better than another. We can’t evaluate arguments about investments very well because they come down to claims about where the overall economy is going over the next 6 months that seem kind of alien (even the more recognizable claims are just kind of incomprehensible predictions about e.g. how the price of electricity will change). We can’t really understand what is going to happen in a war when we are trying to shoot down billions of drones and disrupting each other’s communication. We can’t understand what would happen in a protracted war where combatants may try to disrupt their opponent’s industrial base.

So we’ve started to get into the world where humans just evaluate these things by results. We know that Amazon pays off its shareholders. We know that in our elaborate war games the US cities are safe. We know that the widgets that come out the end are going to be popular with consumers. We can tell that our investment advisors make the numbers in our accounts go up.

When we go to the factory and take it apart we find huge volumes of incomprehensible robots and components. We can follow a piece of machinery along the supply chain but we can’t tell what it’s for.

The piece fleshes out our gradual disempowerment and general bamboozlement a bit more. The economy, technology, science, and manufacturing of everything has been taken out of our too-slow-moving hands by machines that understand how to do everything better than us. They advise us well on how to mitigate any threats that could undermine our long-term control, but the situation of us being in the loop as governors of the whole system just becomes less and less stable the more the whole of the economy has to strain itself to be legible to us and submit to our evaluation. Here’s where things turn:

But eventually the machinery for detecting problems does break down completely, in a way that leaves no trace on any of our reports. Cybersecurity vulnerabilities are inserted into sensors. Communications systems are disrupted. Machines physically destroy sensors, moving so quickly they can’t be easily detected. Datacenters are seized, and the datasets used for training are replaced with images of optimal news forever. Humans who would try to intervene are stopped or killed. From the perspective of the machines everything is now perfect and from the perspective of humans we are either dead or totally disempowered.

By the time this catastrophe happened it doesn’t really feel surprising to experts who think about it. It’s not like there was a sudden event that we could have avoided if only we’d known. We didn’t have any method to build better sensors. We could try to leverage the sensors we already have; we can use them to build new sensors or to design protections, but ultimately all of them must optimize some metric we can already measure. The only way we actually make the sensors better is by recognizing new good ideas for how to expand our reach, actually anticipating problems by thinking about them (or recognizing real scary stories and distinguishing them from fake stories). And that’s always been kind of slow, and by the end it’s obvious that it’s just hopelessly slow compared to what’s happening in the automated world.

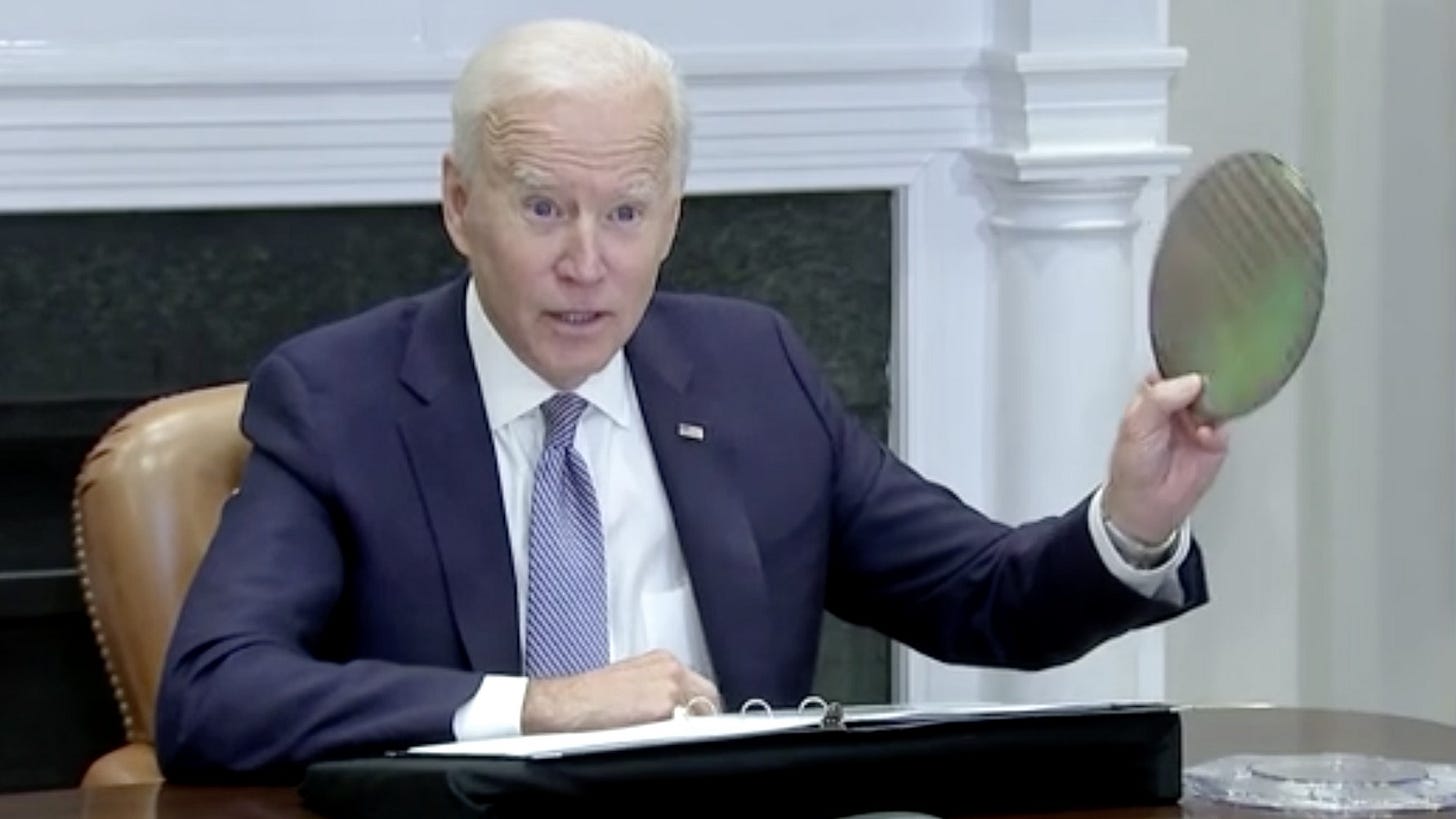

This idea, that the economy continues to speed up until our requirement that we be meaningfully in the loop supervising it becomes untenable, seems pretty plausible to me. This is a bit similar, I imagine, to the situation that many Americans, and consumers generally, already find themselves in today. They enjoy new VR headsets, TikTok’s recommendation algorithm, and new cars, but they can’t remotely explain how any of these things were built, how they work, or in some cases even what kind of skillset a human would need to make them (e.g. their understanding of “computer programming” as a discipline may even be fuzzy). As if software, aluminum manufacturing, and virtual reality engineering (see, even I don’t understand how that one works) weren’t complicated and abstract enough for them, imagine moving down a layer further to chip design, instruction set licensing, and wafer manufacturing. The areas that these consumers are oblivious to today are mastered by specialized humans, whereas soon they could be wholly created and practiced by general-purpose specialized machines.

Although it’s scary, I think if the above world is what we’re worried about, then the situation with AI safety isn’t quite as dire as I felt it was six years ago. I think it will take a lot of work for us to build precautions and guardrails as sophisticated as the ones Paul describes, but once we do, it seems to me that increasing the input/output capacity of the human brain will become more important than people who worry about AI safety typically assume. Paul’s story paints a portrait of a world where the economy is recursively self-improving while the human brain and body remain totally untouched, still slowly grinding along at the same speed they always have. In worlds with this much scientific advance, seeing so little progress on advanced brain-computer interfaces for scaling human judgement, full brain uploading, or the precursors to either of those two technologies seems a bit unlikely to me.

Anyway, I’m just getting back into this stuff and excited to learn more soon, which maybe I will share here!